Too far on AI

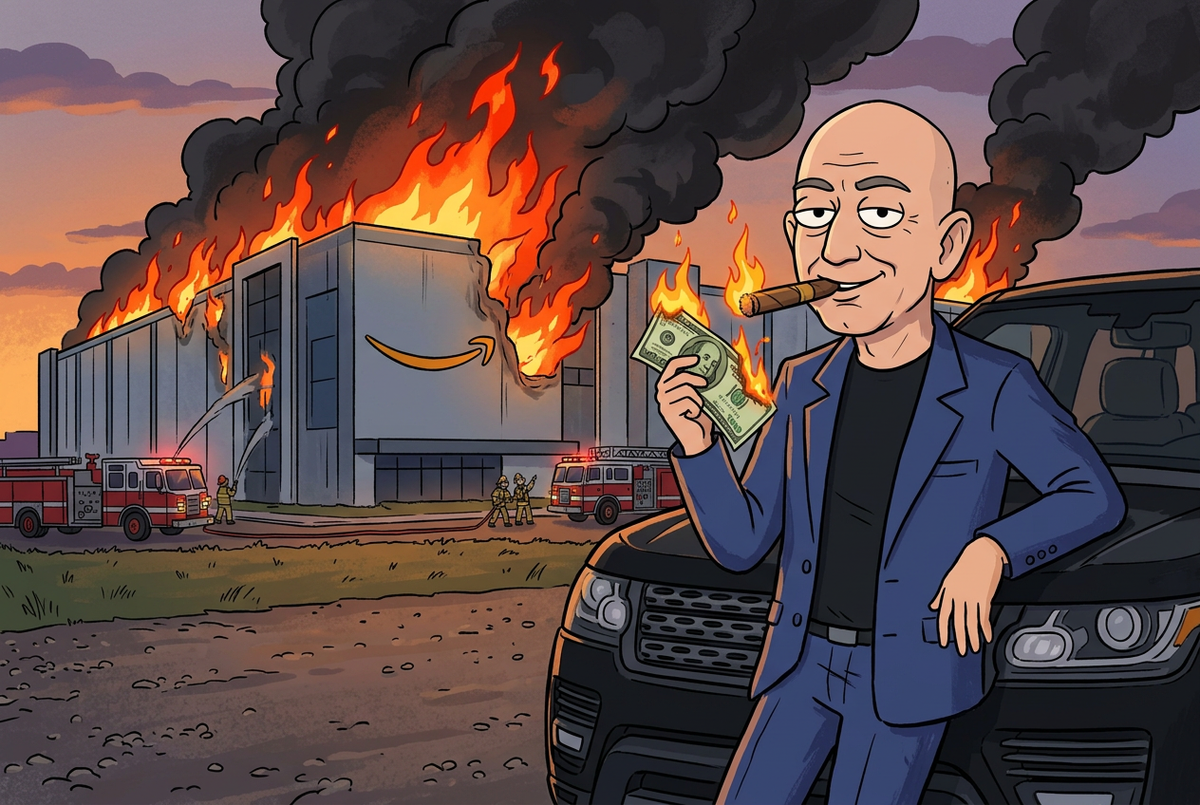

Executives are getting antsy that their HUGE investments in AI are not translating into measurable revenue or productivity gains. So they are pushing harder, with more potential for adverse effects.

I have long been a skeptic about the use of generative AI in the office. Not because it isn't useful; for many tasks – tasks that it is well suited for – it can be brilliant, and provide a force multiplier for a worker. Mostly I was skeptical because it creates a false sense of security, and a feeling that you are better than you are. It is also impacting the workforce in many subtle ways.

One place this is evident is that the number of people who are getting jobs out of college is dropping precipitously. That first gig matters, whether it is in marketing, cranking out boilerplate copy, or as an "associate" attorney who does a lot of case research and related summarizing, or as the entry-level investment banker, cranking out mundane reports on market trends. These are important roles. And yes, I will concede that AI can do all these things well, just handing the results to the mid- to senior-level people to polish and publish or consume.

My problem with this is that while it gets the job done, it is eviscerating the pipeline for the young people who are needed to climb the career ladder and one day be mid career, and perhaps even a grizzled veteran like myself.

Nowhere is this more disturbing than in the world of writing software. As is blasted at us ad nauseam, one of the things that these AI tools are pretty good at is writing code. I mean, they are LLMs, or "Large Language Models", and writing software is writing instructions in a language. In fact, these languages have very rigid rules, and those rules are easy for these LLMs to follow.

Add to that the fact that they've been trained on beaucoup samples of code, from open source repositories, to Stack Overflow postings, to, well, a lot of shit they probably didn't have rights to.

The Valley has been going all in on using AI to write code. Hell, my own company is touting that 70% of our new software is not being written by humans, with a goal to get that close to 100%. There's nothing dangerous there, right?

Alas, senior executives are using AI tools to augment the junior and early-in-career developers, encouraging them to write more complex solutions than they are really ready for, on the theory that the AI assistants (like Microsoft's Copilot) can make them more capable.

But it ain't all roses and unicorns. Today in the Financial Times I saw this headline:

Article here: https://www.ft.com/content/7cab4ec7-4712-4137-b602-119a44f771de

Turns out that mashing the pedal to the metal on AI isn't so hot after all:

The online retail giant said there had been a “trend of incidents” in recent months, characterised by a “high blast radius” and “Gen-AI assisted changes” among other factors, according to a briefing note for the meeting seen by the FT.

"high blast radius"? That is juicy, Junior, juicy.

What exactly has been happening? Apparently the drive to use more and more AI tools, by lesser skilled people who don't really understand the implications of their changes, with those changes being pushed to production without a critical eye from someone who really gets the big picture, can and does lead to "oopsies". Oopsies that take down key parts of their public facing functionality, and cause some heartburn, mostly for the support staff who have to deal with the fallout.[1]

Under “contributing factors” the note included “novel GenAI usage for which best practices and safeguards are not yet fully established”.

“Folks, as you likely know, the availability of the site and related infrastructure has not been good recently,” Dave Treadwell, a senior vice-president at the group, told employees in an email, also seen by the FT.

Huh, "novel" GenAI usage. You mean the directives to use the fuck out of it, and that we are monitoring your usage, so you better be hitting that bong of GenAI often to keep the buzz going sort of novelty?

Look, I don't work there, but I bet that all the senior execs are pushing hard on their organizations to increase the use of the AI tools that they are building and paying for (Amazon has its own set of tools) and the junior software engineers are just doing what they are told.

Junior and mid-level engineers will now require more senior engineers to sign off any AI-assisted changes, Treadwell added.

Ok, so, you are going to overtax your senior staff to babysit the junior and mid level engineers, because their use of AI is causing issues. How many of these senior people do you have? How many reviews can they realistically do? Is this use of AI really going to increase velocity?

Snort.

Separately, the company’s cloud computing arm — Amazon Web Services — has suffered at least two incidents linked to the use of AI coding assistants, which the company has been actively rolling out to its staff.

AWS suffered a 13-hour interruption to a cost calculator used by customers in mid-December after engineers allowed the group’s Kiro AI coding tool to make certain changes, and the AI tool opted to “delete and recreate the environment”, the FT previously reported.

Wait, they had a downtime on their cost calculator?? Now the bean counters are going to go ballistic.

This wouldn't be so surprising had there not been many rounds of layoffs in corporate, because, AI something or something...

Amazon has undertaken multiple rounds of lay-offs in recent years, most recently eliminating 16,000 corporate roles in January. The group has disputed the claim that headcount cuts were responsible for an increase in recent outages.

Do you think they let those junior people go and kept those senior engineers who are needed to provide those code reviews before pushing to prod?

Neither do I.

Late addition

Dedicated reader KG provided a link to a Reddit thread on this that is entertaining to read: Amazon holds engineering meeting amongst AI related outages

Just delish!

1 - Sites like amazon.com are not simple. They are constructed from an ecosystem of microservices that are orchestrated to deliver each component. For example, when you look at a product listing, there are sub-systems that match the description, the images, the reviews, the pricing, and any alternative ways to buy, all working in concert. Some of those may fail, and that doesn't prevent Amazon from making the sale, but it does impact people's use of the site. A Jethro Tull song comes to mind: "Nothing is Easy" from the album Stand Up, if you want a deep pull.